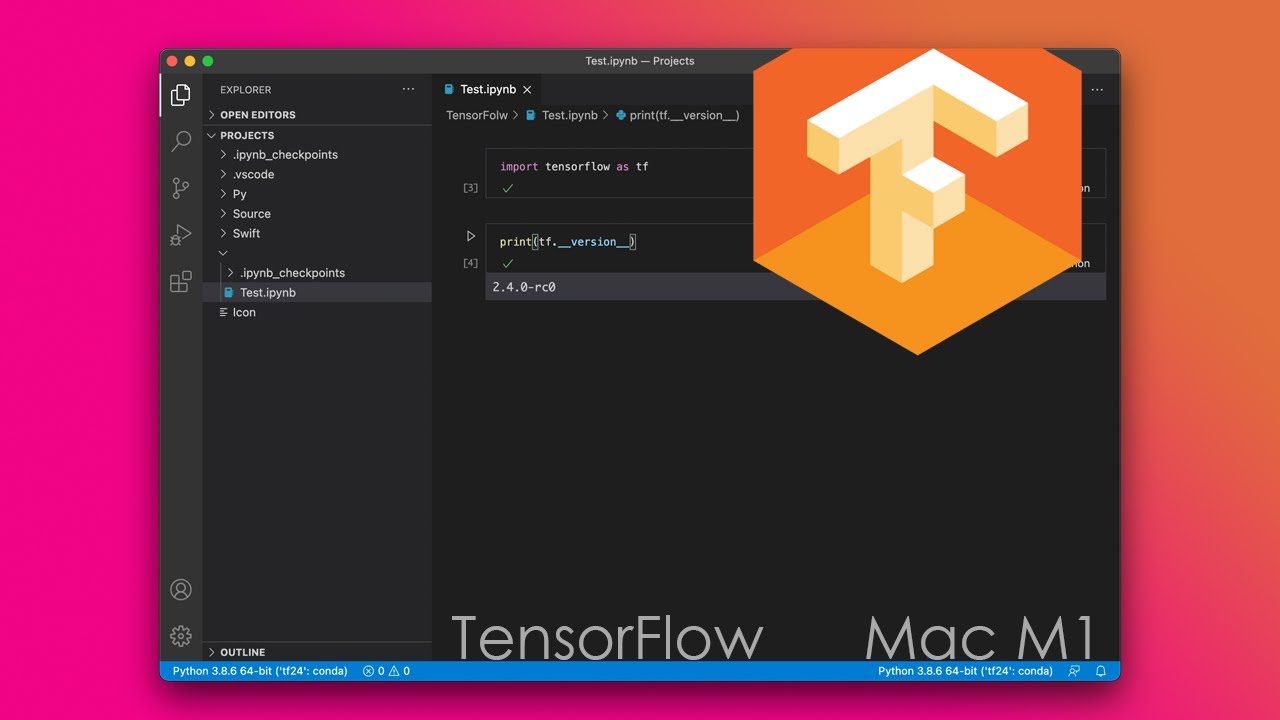

When Apple released the M1, integration with TensorFlow was very difficult. The chip uses the Apple Neural Engine, a component that allows the Mac to perform machine learning tasks blazingly fast and without thermal issues. In the detailed release notes for Python 3.9.1, the developers write: "As of 3.9.1, Python now fully supports building and running on macOS 11.0 (Big Sur) and on Apple Silicon Macs."

As of now, Miniforge runs natively on all M1 chips (M1, M1 Pro, M1 Max), so we'll stick with it. Download the ARM64 version for Mac, the one marked in Image 1 (Miniforge download page, image by author). It will download an SH file.

That said, any library that ships compiled binaries needs to be built and distributed for M1 (aka osx-arm64). That can take a while, and such builds are often just not available for older packages. Even when they exist, they're often buggy. For example, when I installed some packages related to Jax, dm-tree was pulled in, and its binary was broken for osx-arm64, so I had to install it through pip as a workaround. Another example was when I needed an older version of TensorFlow (1.4) to run an old repository, for which there are no M1 packages available. I had to pull out my Linux machine to get that running at all.

Falling back to Rosetta doesn't reliably help either. Many packages don't work under Rosetta, and you'll constantly get errors about your CPU not supporting required instructions or about dynamically linked libraries missing from your system. If just one out of potentially hundreds of packages doesn't play well with Rosetta, there's nothing you can do about it.

EDIT: I've also seen people mention Rosetta environments, but in my experience these really don't work well at all.

So, to summarize: the issues I've had have been with packages that both require compiled binaries and are older or not well maintained. If your use case doesn't require that, you should have few to no issues.
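Because these problems come down to whether you're running native osx-arm64 code or x86_64 code under Rosetta, it helps to check which one your Python interpreter actually is. Below is a minimal sketch using the standard-library `platform.machine()`, assuming macOS behavior: it reports `arm64` for a native Apple Silicon build and `x86_64` for an Intel build, including one translated by Rosetta 2. The function name `describe_python_arch` is hypothetical.

```python
import platform
from typing import Optional


def describe_python_arch(machine: Optional[str] = None) -> str:
    """Describe how this Python build is running, based on platform.machine()."""
    if machine is None:
        machine = platform.machine()
    if machine == "arm64":
        # Native Apple Silicon build (macOS reports arm64; conda calls this osx-arm64)
        return "native arm64"
    if machine == "x86_64":
        # Either an Intel Mac, or an Intel build translated by Rosetta 2 on Apple Silicon
        return "x86_64 (Intel, or Rosetta 2 on an M1)"
    # Anything else, e.g. 'aarch64' on ARM Linux
    return f"other: {machine}"


print(describe_python_arch())
```

On an M1, an `x86_64` result means the interpreter (and every compiled package it loads) is going through Rosetta. The string alone can't tell an Intel Mac from Rosetta 2, which is why that branch names both.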
Since Apple abandoned Nvidia support, the advent of the M1 chip sparked new hope in the ML community. I use miniforge3 (which is just miniconda3 with conda-forge as the default channel) a lot and come across issues quite often.

I have not found any fundamental compatibility problems. All of the Python/conda side of the fence is arm64 native. On the other side of the fence, MATLAB's graphics performance is AWFUL on M1, and the whole MATLAB UI occasionally crashes; it runs under Rosetta 2, though. My NumPy is just `conda install numpy` from whichever channel conda chooses to take it from, and I made basically no effort to install conda/NumPy this way beyond setting the CONDA_SUBDIR environment variable. NumPy shows that some vector extensions are used, but not all of them:

    Supported SIMD extensions in this NumPy install:
        baseline = NEON,NEON_FP16,NEON_VFPV4,ASIMD
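To see the same kind of information on your own machine, NumPy's `np.show_config()` prints the BLAS/LAPACK libraries it was built against and, in recent NumPy versions, a SIMD extensions section like the one quoted above (the exact format differs between versions). The sketch below captures that output as a string and also reports `CONDA_SUBDIR`; the helper names `numpy_build_report` and `conda_subdir` are hypothetical.

```python
import contextlib
import io
import os

import numpy as np


def numpy_build_report() -> str:
    """Capture what np.show_config() prints (BLAS/LAPACK and, in newer NumPy, SIMD info)."""
    buf = io.StringIO()
    with contextlib.redirect_stdout(buf):
        np.show_config()
    return buf.getvalue()


def conda_subdir() -> str:
    """Return the CONDA_SUBDIR environment variable, or '<unset>' if it isn't set."""
    return os.environ.get("CONDA_SUBDIR", "<unset>")


if __name__ == "__main__":
    print("NumPy", np.__version__)
    print("CONDA_SUBDIR =", conda_subdir())
    print(numpy_build_report())
```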

I have a 16" M1 Pro machine and use a commercial license of Conda. All of the packages that I particularly care about (numpy, scipy, astropy, skimage… You need the env var CONDA_SUBDIR=osx-arm64 set in your shell rc file or elsewhere to make sure conda only uses native code. Numerics performance (NumPy) is generally about on par with my previous 15" MBP with the last generation of Intel i9s Apple used. The actual linear algebra part of NumPy (matmul and so on) is multi-core, just as it is with the MKL backend (or OpenBLAS). Doing the matmul on A = np.random.rand(4096, 4096) takes 598 ms on the M1 Pro, and all cores light up in htop (including the E-cores). The dual Xeon 6248R server I ssh into to actually run most of my code does the same in 82 ms (~7x faster, with 48 cores instead of 8 P-cores). Some cases (mkl_fft, _rand, and so on) are slower on the M1 because, as best I can tell, no analog using Apple Accelerate or vecLib exists.

So, MKL is better on Intel chips than OpenBLAS is on ARM64. Also, if you have an M1 or M2 Mac, more packages compiled specifically for that chipset are available on Conda than on pip, which would use the…

Macs with the ARM64-based M1 chip, launched shortly after Apple's initial announcement of its plan to migrate to Apple Silicon, got quite a lot of attention from both consumers and developers. The M1 made headlines especially because of its outstanding performance, not just in ARM64 territory but across the whole PC industry.

Let's create a PySpark DataFrame with some sample data to validate the installation. Enter the following commands in the PySpark shell in the same order (note that the shell prints the Spark and Python versions to the terminal when it starts):

data = …

Now access the Spark Web UI from your favorite web browser to monitor your jobs.

In this PySpark installation article, you have learned the step-by-step installation of PySpark. The steps include installing Java, Scala, Python, and PySpark using Homebrew. For more examples on PySpark, refer to PySpark Tutorial with Examples.
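The validation step can also be run from a standalone Python script instead of the interactive shell. Below is a minimal sketch that creates a small DataFrame, prints the Spark and Python versions, and checks the row count. It assumes PySpark and a compatible Java runtime are installed. The sample rows, column names, and helper names are hypothetical placeholders; the article's own `data = …` list is not reproduced here.

```python
import importlib.util
import platform

# Hypothetical sample data, not the article's original list
COLUMNS = ["language", "users_count"]
SAMPLE_DATA = [("Java", 20000), ("Python", 100000), ("Scala", 3000)]


def pyspark_available() -> bool:
    """True if the pyspark package can be imported by this interpreter."""
    return importlib.util.find_spec("pyspark") is not None


def validate_pyspark(stop_session: bool = True) -> bool:
    """Build a tiny DataFrame and check its row count; returns False if PySpark is missing."""
    if not pyspark_available():
        print("pyspark is not importable from this Python interpreter")
        return False

    # Imported lazily so the availability check above can run without PySpark
    from pyspark.sql import SparkSession

    # getOrCreate() reuses an existing session (e.g. the shell's `spark`) if there is one
    spark = SparkSession.builder.appName("pyspark-install-check").getOrCreate()
    try:
        print("Spark version: ", spark.version)
        print("Python version:", platform.python_version())
        df = spark.createDataFrame(SAMPLE_DATA, COLUMNS)
        df.show()
        return df.count() == len(SAMPLE_DATA)
    finally:
        if stop_session:
            spark.stop()
```

Call `validate_pyspark()` from a script. If you paste it into the pyspark shell, where a session named `spark` already exists, pass `stop_session=False` so the shell's session keeps running.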
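To reproduce a measurement like the 4096 × 4096 matmul comparison quoted earlier (598 ms on the M1 Pro vs. 82 ms on the dual Xeon 6248R server), here is a minimal benchmark sketch, assuming NumPy is installed. It uses `np.random.default_rng` instead of the legacy `np.random.rand`; both draw float64 values uniformly from [0, 1). It runs one untimed warm-up multiplication and reports the best of a few runs. The function name `best_matmul_seconds` and its defaults are hypothetical choices for illustration.

```python
import time

import numpy as np


def best_matmul_seconds(n: int = 4096, repeats: int = 3, seed: int = 0) -> float:
    """Return the fastest wall-clock time, in seconds, of one n x n float64 matmul."""
    rng = np.random.default_rng(seed)
    a = rng.random((n, n))
    _ = a @ a  # warm-up: lets the BLAS backend start its threads before timing
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        _ = a @ a
        best = min(best, time.perf_counter() - start)
    return best
```

`best_matmul_seconds() * 1000` gives milliseconds for the 4096 × 4096 case. Taking the minimum over several runs filters out one-off scheduling noise. Differences between machines mostly reflect core count and which BLAS backend NumPy is linked against, so compare builds on the same machine when possible.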
